Improving Mushroom Pose Estimation with Synthetic Data Scaling and Multi-View Attention Fusion

Overview

Automating mushroom harvesting remains challenging: phenotypic analysis is labor-intensive, mushrooms must be handled delicately, and large annotated datasets are difficult to collect in low-light, high-humidity cultivation environments. This work presents a synthetic-to-real pipeline for mushroom detection and 3D pose estimation that relies on scalable synthetic data and minimal manual annotation. Synthetic images are first generated in Blender, and a domain adaptation strategy is then applied to reduce the visual gap between synthetic and real cultivation scenes. A transformer-based multi-view fusion network combines lightweight visual features with camera pose embeddings to estimate key geometric parameters of each mushroom (bottom center, top center, and maximum radius) from four top-view images. The system is designed to support precise robotic grasp planning for autonomous mushroom harvesting in data-limited agricultural settings.
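
The fusion step can be illustrated with single-head scaled dot-product self-attention over one token per view, where each token is a visual feature vector plus a projected camera pose. This is a minimal pure-Python sketch under stated assumptions, not the authors' network: the feature size, the position-plus-quaternion pose format, mean pooling, the softplus on the radius, and the class name `MultiViewFusion` are all hypothetical, and random weights stand in for trained ones.

```python
import math
import random

# Assumed sizes for illustration only; the source does not specify them.
FEAT_DIM = 8  # per-view visual feature length (hypothetical)
POSE_DIM = 7  # camera pose as position (x, y, z) + quaternion (w, x, y, z), assumed
OUT_DIM = 7   # bottom center (3) + top center (3) + maximum radius (1)


def rand_matrix(rows, cols, rng):
    # Small random weights stand in for trained parameters.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def softmax(xs):
    mx = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - mx) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


class MultiViewFusion:
    """Hypothetical single-head self-attention fusion over per-view tokens."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        self.pose_proj = rand_matrix(FEAT_DIM, POSE_DIM, rng)  # pose -> embedding
        self.wq = rand_matrix(FEAT_DIM, FEAT_DIM, rng)
        self.wk = rand_matrix(FEAT_DIM, FEAT_DIM, rng)
        self.wv = rand_matrix(FEAT_DIM, FEAT_DIM, rng)
        self.head = rand_matrix(OUT_DIM, FEAT_DIM, rng)

    def forward(self, view_feats, cam_poses):
        # One token per view: visual feature plus its camera pose embedding.
        tokens = [
            [f + p for f, p in zip(feat, matvec(self.pose_proj, pose))]
            for feat, pose in zip(view_feats, cam_poses)
        ]
        q = [matvec(self.wq, t) for t in tokens]
        k = [matvec(self.wk, t) for t in tokens]
        v = [matvec(self.wv, t) for t in tokens]
        scale = 1.0 / math.sqrt(FEAT_DIM)
        attended = []
        for qi in q:
            # Scaled dot-product attention of this view over all views.
            weights = softmax([scale * sum(a * b for a, b in zip(qi, kj)) for kj in k])
            attended.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(FEAT_DIM)])
        # Residual connection, then mean-pool across views into one scene vector.
        pooled = [
            sum(t[c] + a[c] for t, a in zip(tokens, attended)) / len(tokens)
            for c in range(FEAT_DIM)
        ]
        out = matvec(self.head, pooled)
        x = out[6]
        radius = max(x, 0.0) + math.log1p(math.exp(-abs(x)))  # stable softplus keeps radius > 0
        return out[0:3], out[3:6], radius
```

Because this sketch uses no view-index positional encoding, shuffling the four views together with their poses leaves the prediction unchanged up to floating-point rounding; only the camera pose embeddings tell the model where each view was taken from.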

Key Highlights

Synthetic-to-Real Learning

Generate training data at scale in simulation and transfer it to real mushroom cultivation scenes with less manual annotation.
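
One way to keep simulated data scalable and reproducible is to sample every scene description from a seeded random generator, varying shape, pose, density, and illumination. The sketch below only produces scene descriptions and does not call Blender's Python API; the field names, value ranges, units, and the `sample_scene` helper are hypothetical.

```python
import random
from dataclasses import dataclass, field


@dataclass
class MushroomSpec:
    x: float            # position on the bed in metres (assumed units)
    y: float
    tilt_deg: float     # stem tilt from vertical
    cap_radius: float   # metres
    stem_height: float  # metres


@dataclass
class SceneSpec:
    light_energy: float         # arbitrary units for the renderer (assumption)
    light_color_temp_k: float
    mushrooms: list = field(default_factory=list)


def sample_scene(rng, bed_size=(0.6, 0.4), density_range=(5, 30)):
    """Sample one randomized scene; all ranges are illustrative assumptions."""
    n = rng.randint(*density_range)  # density: mushrooms per bed (inclusive range)
    mushrooms = [
        MushroomSpec(
            x=rng.uniform(0.0, bed_size[0]),
            y=rng.uniform(0.0, bed_size[1]),
            tilt_deg=rng.uniform(0.0, 30.0),
            cap_radius=rng.uniform(0.01, 0.04),
            stem_height=rng.uniform(0.02, 0.06),
        )
        for _ in range(n)
    ]
    return SceneSpec(
        light_energy=rng.uniform(50.0, 500.0),
        light_color_temp_k=rng.uniform(3000.0, 6500.0),
        mushrooms=mushrooms,
    )
```

A rendering script would then turn each `SceneSpec` into a Blender scene; with one seed per image, for example `[sample_scene(random.Random(s)) for s in range(1000)]`, the dataset can be regenerated or extended deterministically.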

Multi-View Geometry Estimation

Fuse multiple top-view observations and camera poses to estimate grasp-oriented mushroom geometry under occlusion and dense growth.

Robotic Harvesting Interface

Provide pose-related outputs that can support downstream grasp planning and autonomous harvesting with robotic systems.
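
The three predicted parameters already determine simple grasp quantities. A minimal sketch, assuming a z-up frame in metres and a hypothetical fixed gripper clearance: the stem axis runs from the bottom center to the top center, its length gives the height, its angle to vertical gives the tilt, and the maximum radius bounds the required gripper opening. The `MushroomPose` type and `grasp_features` helper are illustrative, not part of the described system.

```python
import math
from dataclasses import dataclass


@dataclass
class MushroomPose:
    bottom_center: tuple  # (x, y, z), assumed z-up frame in metres
    top_center: tuple
    max_radius: float


def grasp_features(pose, clearance=0.005):
    """Derive simple grasp quantities; `clearance` is a hypothetical margin per side."""
    axis = [t - b for t, b in zip(pose.top_center, pose.bottom_center)]
    height = math.sqrt(sum(a * a for a in axis))
    if height == 0.0:
        raise ValueError("top and bottom centers coincide")
    unit_axis = [a / height for a in axis]
    # Angle between the mushroom axis and the vertical (+z) direction.
    tilt_deg = math.degrees(math.acos(max(-1.0, min(1.0, unit_axis[2]))))
    return {
        "approach_axis": unit_axis,  # direction a gripper could align with
        "height": height,
        "tilt_deg": tilt_deg,
        "gripper_opening": 2.0 * pose.max_radius + 2.0 * clearance,
    }
```

For an upright mushroom with its top center 0.05 m above its bottom center and a 0.02 m maximum radius, this yields zero tilt and a 0.05 m opening under the assumed 5 mm clearance.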

Method Pipeline

- Synthetic scene generation: Realistic mushroom plantation scenes are created in Blender with controllable variations in shape, pose, density, and illumination.

- Domain adaptation: Synthetic images are translated toward real visual appearance to narrow the sim-to-real gap.

- Multi-view acquisition: Multiple top-view images and corresponding camera pose information are collected for each mushroom instance.

- Pose estimation: A transformer-based fusion model predicts the geometric attributes needed for grasping, namely the bottom center, top center, and maximum radius of each mushroom.
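
The source does not name the translation model used for domain adaptation. As a much simpler stand-in, each color channel of a synthetic image can be shifted and scaled so that its mean and standard deviation match statistics measured on real cultivation images. Images are represented here as flat lists of RGB tuples in [0, 1], and both helper names are hypothetical.

```python
import statistics


def channel_stats(pixels):
    """Per-channel mean and population std of a list of (r, g, b) pixels."""
    means = [statistics.fmean([px[c] for px in pixels]) for c in range(3)]
    stds = [statistics.pstdev([px[c] for px in pixels]) for c in range(3)]
    return means, stds


def match_channel_stats(synthetic, real_mean, real_std, eps=1e-8):
    """Stand-in adaptation (assumption): match each channel's mean/std to real images."""
    out_channels = []
    for c in range(3):
        values = [px[c] for px in synthetic]
        mu = statistics.fmean(values)
        sigma = statistics.pstdev(values)
        scale = real_std[c] / (sigma + eps)  # eps guards against flat channels
        # Affine remap, clipped to the valid [0, 1] intensity range.
        out_channels.append([min(1.0, max(0.0, (v - mu) * scale + real_mean[c])) for v in values])
    return list(zip(*out_channels))
```

Unlike a learned image-to-image translation model, this global remap cannot change texture or geometry, so it is best read as a baseline for global brightness and color differences.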

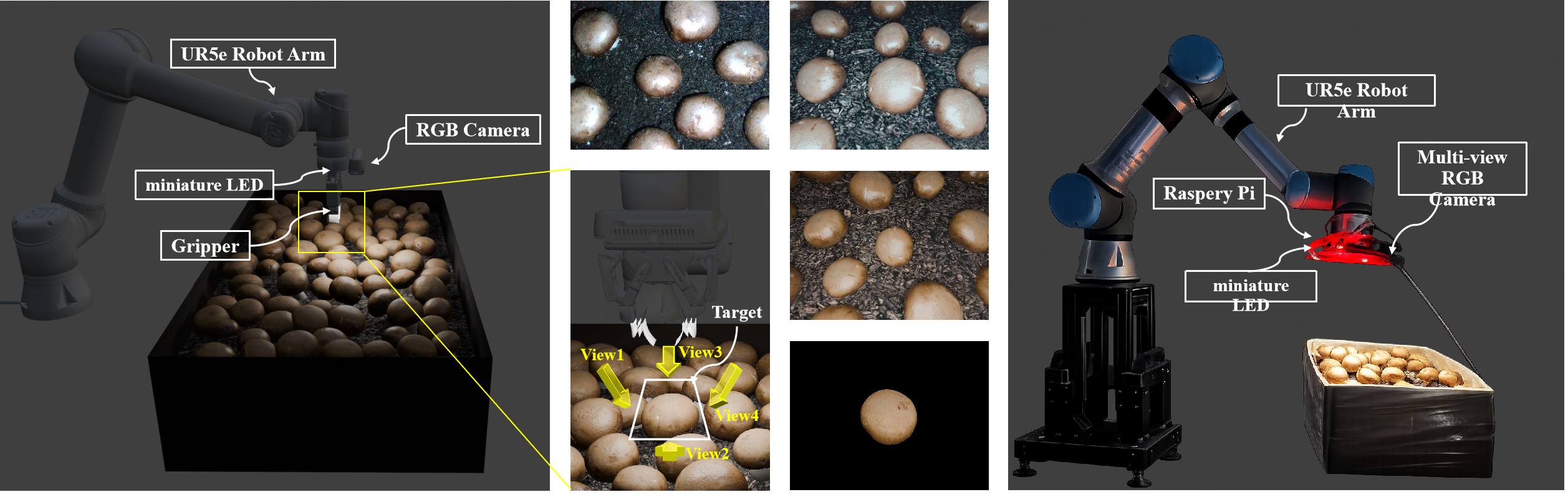

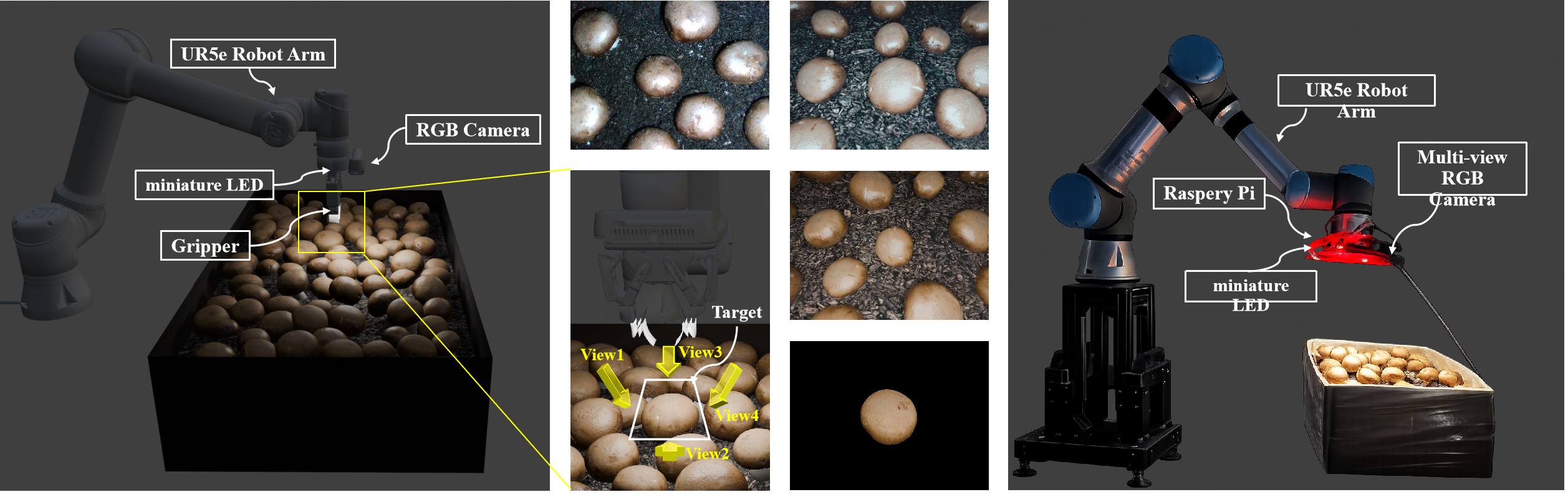

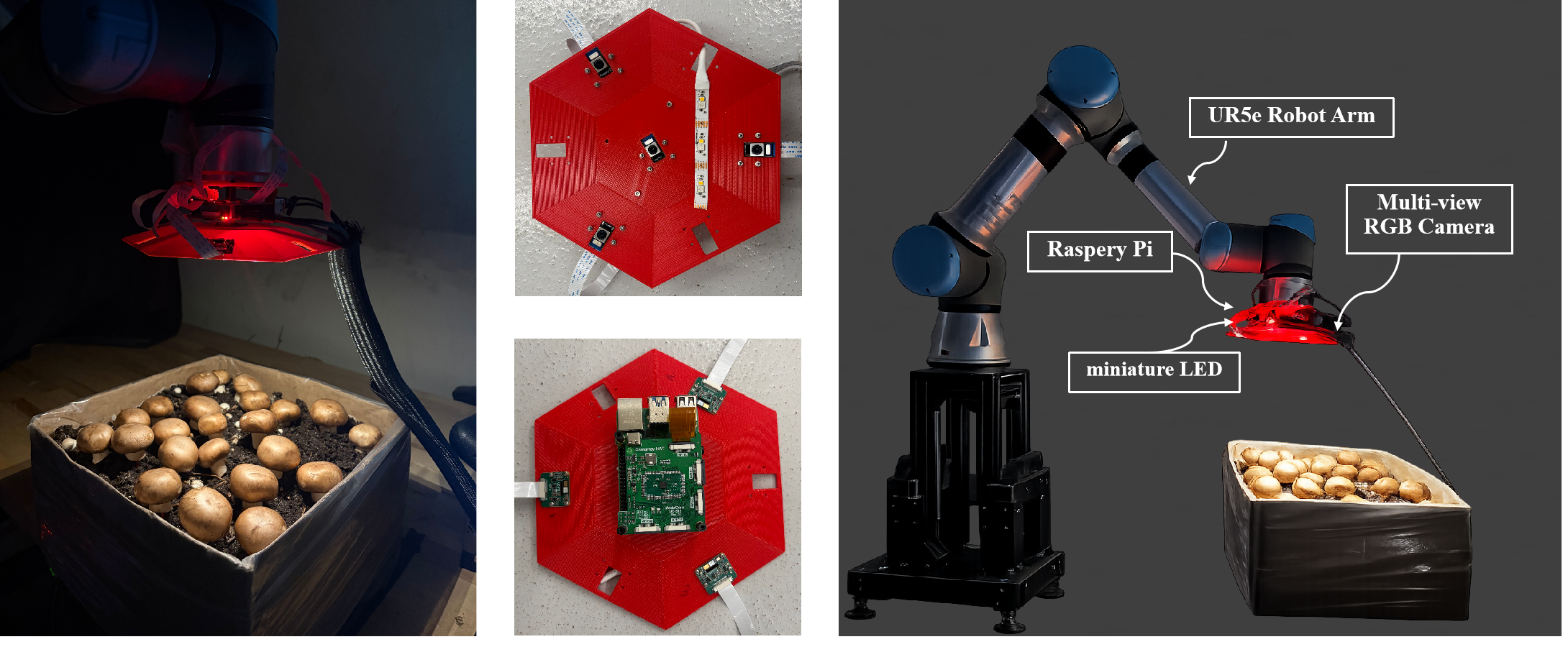

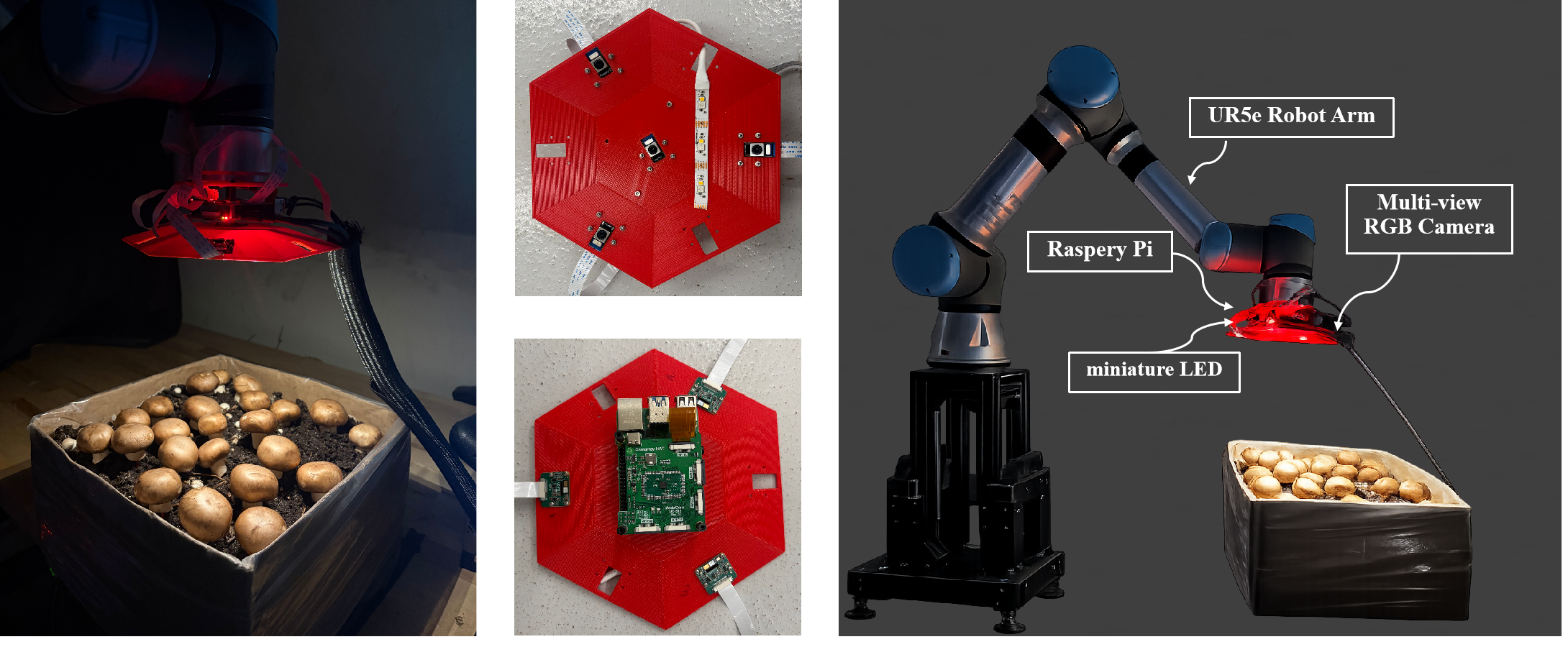

Figure 1: Overview of the robotic multi-view imaging platform used for mushroom data acquisition. The system integrates a UR5e robotic arm, multi-view RGB cameras, a Raspberry Pi control unit, and LED illumination to capture structured observations of mushroom cultivation scenes.

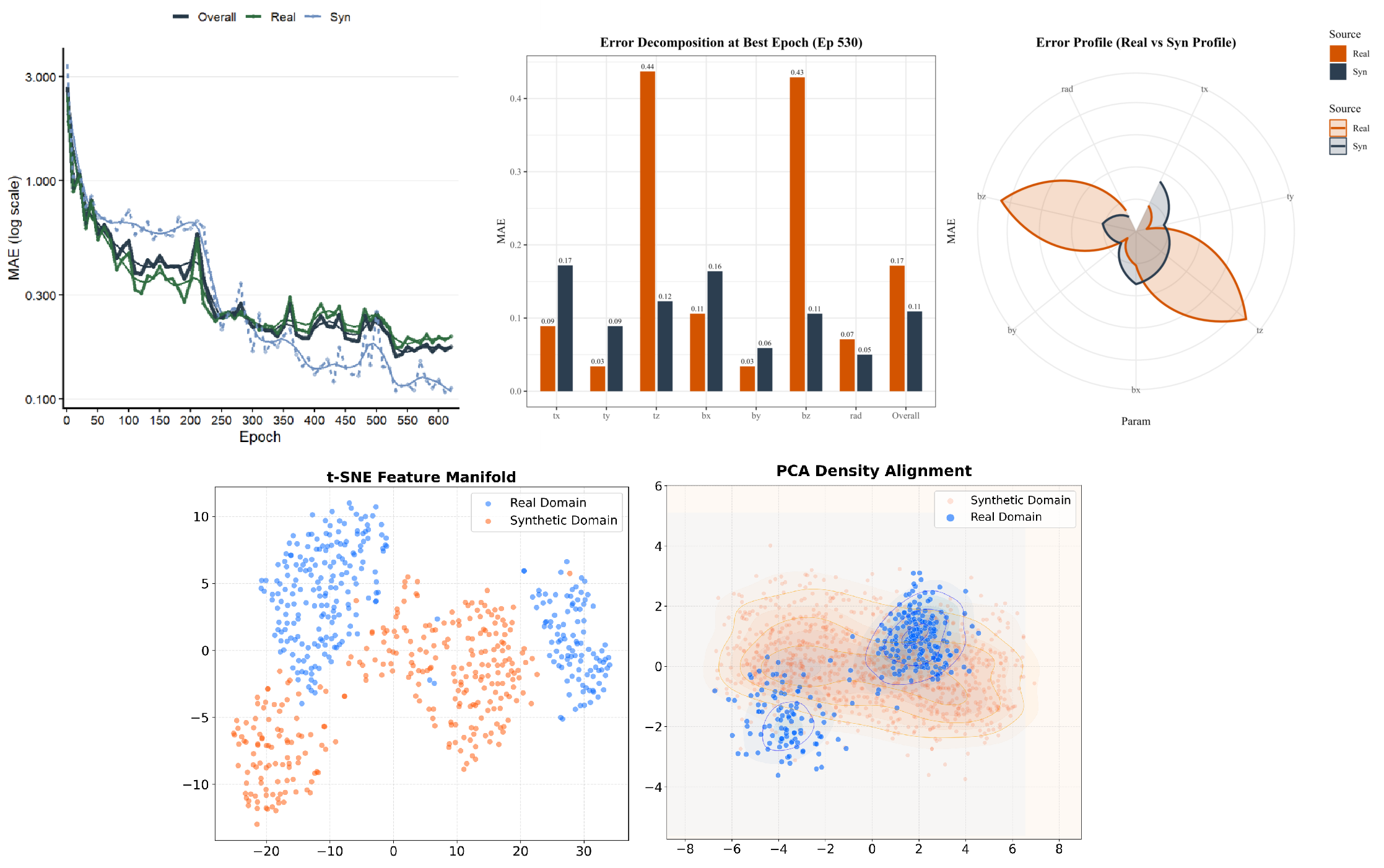

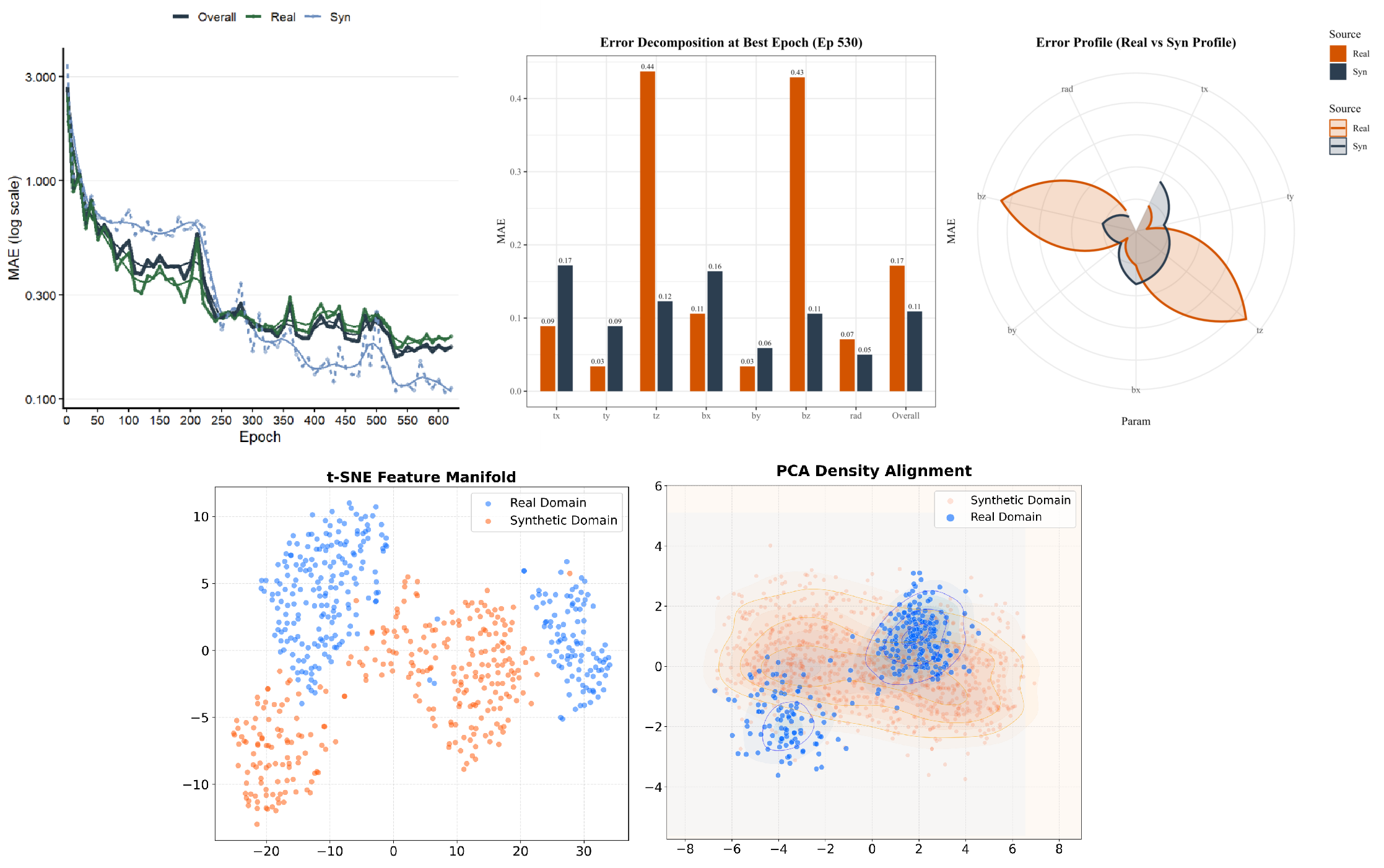

Figure 2: Analysis of the synthetic-to-real training pipeline. Top: training error convergence and error decomposition across pose parameters. Bottom: feature distributions visualized with t-SNE and PCA density alignment, illustrating the relationship between the synthetic and real domains.
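
The error decomposition reports errors separately for each pose parameter. The exact metrics are not stated; the sketch below assumes the mean Euclidean distance for the bottom and top centers and the mean absolute error for the maximum radius, in whatever units the annotations use. The `error_decomposition` function and its dictionary keys are hypothetical.

```python
import math


def error_decomposition(preds, targets):
    """Mean error per pose parameter (assumed metrics, hypothetical format).

    Each item is a dict with 'bottom_center' and 'top_center' (x, y, z) tuples
    and a 'max_radius' float.
    """
    if len(preds) != len(targets) or not preds:
        raise ValueError("need equal-length, non-empty lists")
    totals = {"bottom_center": 0.0, "top_center": 0.0, "max_radius": 0.0}
    for p, t in zip(preds, targets):
        # Assumption: Euclidean distance for 3D points, absolute error for the radius.
        totals["bottom_center"] += math.dist(p["bottom_center"], t["bottom_center"])
        totals["top_center"] += math.dist(p["top_center"], t["top_center"])
        totals["max_radius"] += abs(p["max_radius"] - t["max_radius"])
    n = len(preds)
    return {name: total / n for name, total in totals.items()}
```

Reporting the three terms separately shows which geometric quantity dominates the overall error, which is what a per-parameter breakdown is meant to expose.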

Results

Preliminary experiments indicate that the proposed framework improves pose estimation accuracy over simpler baselines, which is promising for robotic harvesting applications. In particular, multi-view fusion and synthetic data scaling improve robustness in real cultivation scenes. These results suggest that simulation-driven perception is a practical direction for scalable mushroom harvesting automation.